Introduction

Medical imaging AI sits at one of the most promising intersections of deep learning and clinical practice. The core task is deceptively simple: given a digitized pathology slide, identify and classify regions of diagnostic interest. The execution is not simple at all.

A single whole-slide image can exceed 100,000 x 100,000 pixels. Standard convolutional networks cannot process inputs at this scale. The field has converged on a set of architectural patterns to handle this, and understanding them is prerequisite to building anything that works in production.
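To make the scale concrete, the arithmetic can be written out directly. This is a back-of-the-envelope sketch that assumes uncompressed 8-bit RGB and a 256-pixel tile with no overlap; real slide files are compressed on disk, so the figure describes decoded pixels, not file size.

```python
import math

# Dimensions quoted above; 8-bit RGB is an assumption for the estimate.
width = height = 100_000
bytes_per_pixel = 3

raw_bytes = width * height * bytes_per_pixel
print(f"{raw_bytes / 1e9:.0f} GB of decoded pixels")  # 30 GB

# Number of non-overlapping 256x256 tiles needed to cover the slide.
tile_size = 256
tiles_per_side = math.ceil(width / tile_size)
total_tiles = tiles_per_side ** 2
print(f"{tiles_per_side} tiles per side, {total_tiles} tiles in total")
```

Even before any network activations are counted, the decoded pixels alone exceed the memory of most accelerators.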

How Medical AI Analyzes Pathology Slides

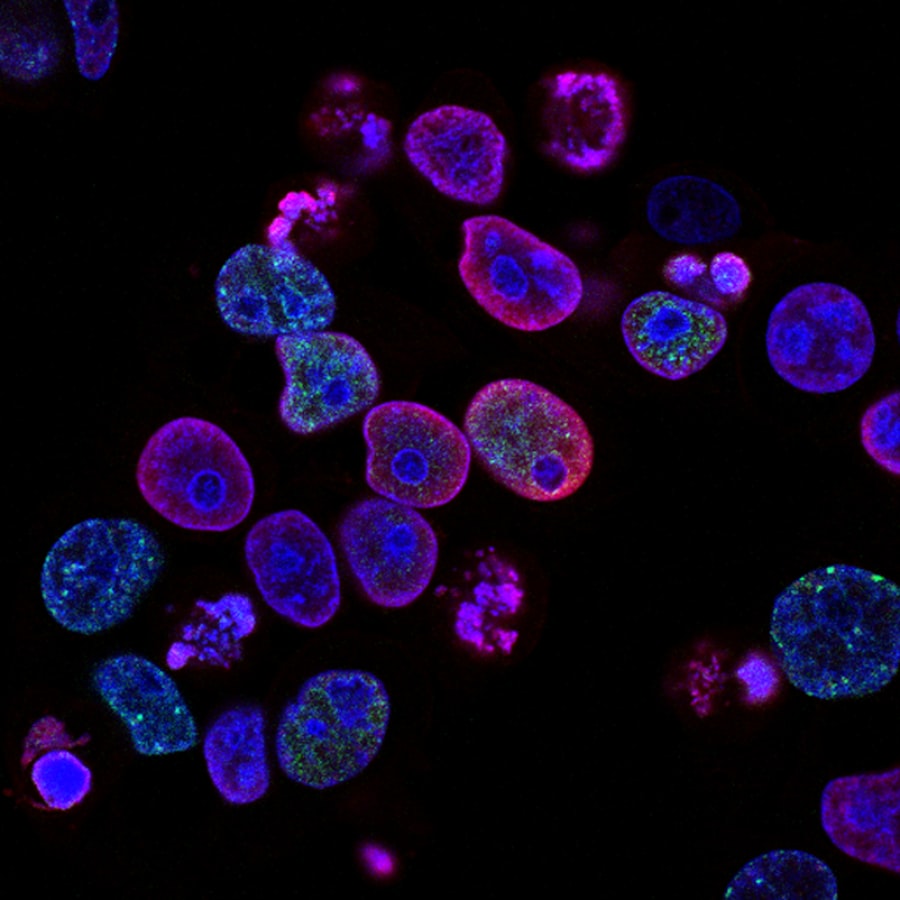

Understanding how medical AI works begins with understanding the data it processes. A digitized pathology slide is not a photograph. It is a pyramidal image, stored at multiple resolution levels, with the highest resolution capturing cellular-level detail across an area that may span several square centimeters of tissue.
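A rough sketch of what a pyramid looks like in numbers follows. The function name, the downsample step of 4, and the stopping rule are illustrative assumptions; actual level layouts depend on the scanner and file format.

```python
def pyramid_levels(base_width, base_height, downsample_step=4, min_side=1024):
    """List (level, downsample, width, height) for a hypothetical pyramid.

    Levels are added until the shorter side would fall below min_side.
    Level 0 is always the full-resolution image.
    """
    levels = []
    level, downsample = 0, 1
    while True:
        width = base_width // downsample
        height = base_height // downsample
        if level > 0 and min(width, height) < min_side:
            break
        levels.append((level, downsample, width, height))
        level += 1
        downsample *= downsample_step
    return levels


for level, downsample, width, height in pyramid_levels(100_000, 80_000):
    print(f"level {level}: {downsample:>3}x downsample, {width} x {height} px")
```

Low-resolution levels are convenient for cheap operations such as locating tissue, while the full-resolution level is reserved for the tiles the model actually reads.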

The model never sees the full slide at once. Instead, the slide is divided into overlapping tiles, typically 256x256 or 512x512 pixels at 20x or 40x magnification. Each tile is processed independently through a feature extractor, producing a fixed-length embedding vector. The collection of embeddings is then aggregated using an attention mechanism to produce a slide-level prediction.
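The overlapping grid described here can be sketched as a list of tile origins. The helper name `tile_origins`, the 32-pixel overlap, and the choice to add one final tile flush with each edge are assumptions made for illustration, not a standard.

```python
def tile_origins(width, height, tile_size=256, overlap=32):
    """Return top-left (x, y) corners of overlapping tiles covering a region."""
    if not 0 <= overlap < tile_size:
        raise ValueError("overlap must satisfy 0 <= overlap < tile_size")
    stride = tile_size - overlap

    def axis_positions(length):
        if length <= tile_size:
            return [0]
        positions = list(range(0, length - tile_size + 1, stride))
        # Add a last tile flush with the edge so the border is not dropped.
        if positions[-1] + tile_size < length:
            positions.append(length - tile_size)
        return positions

    xs = axis_positions(width)
    ys = axis_positions(height)
    return [(x, y) for y in ys for x in xs]


origins = tile_origins(1000, 512)
print(len(origins), origins[0], origins[-1])  # 15 (0, 0) (744, 256)
```

A larger overlap reduces the chance that a small structure is split across a tile boundary, at the cost of more tiles to encode.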

This mechanism explains two things that otherwise feel arbitrary.

The Core Pipeline Architecture

With the mechanism in place, the pipeline taxonomy becomes intelligible. Each stage is a structured transformation of the raw slide data into a clinically actionable prediction.

- Tissue detection typically uses HSV or Otsu binarization, though learned segmentation models perform better on staining artifacts.
- Stain normalization (Macenko or Vahadane methods) standardizes color distributions across slides from different scanners and labs.
- Feature extraction (ResNet-50, ViT, or increasingly, pathology-specific foundation models like UNI or CONCH) maps each tile to a dense embedding vector.

Approaches to Medical Image Analysis

Medical AI vs. Alternatives: The Diagnostic Guide

| Approach | Root cause it solves | When to use it | The signal that it's time |
|---|---|---|---|
| Multiple Instance Learning | Slide-level labels only, no tile annotations available | Always start here for whole-slide classification | You have slide diagnoses but no pixel-level masks |
| Supervised segmentation | Need precise spatial localization of pathology | After pathologist-annotated masks are available | Clinical workflow requires tumor boundary delineation |
| Foundation model fine-tuning | Limited labeled data for rare pathologies | When pretrained pathology encoders are available | Small dataset but high performance requirements |
| Graph neural networks | Spatial relationships between cells matter for diagnosis | When tissue microenvironment drives the prediction | Tile-level features alone miss inter-cellular context |
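For the Multiple Instance Learning row, the attention-based aggregation mentioned earlier can be sketched in a few lines. This is a minimal pure-Python illustration of one common formulation (a score per tile from a small tanh layer, a softmax over tiles, then a weighted sum of embeddings). The matrix `V` and vector `w` would be learned in a real model; the values below are placeholders.

```python
import math


def attention_pool(embeddings, V, w):
    """Pool tile embeddings into one slide embedding with attention weights.

    embeddings: list of tile vectors; V: hidden x dim matrix; w: hidden vector.
    Returns (slide_embedding, attention_weights).
    """
    scores = []
    for h in embeddings:
        hidden = [math.tanh(sum(v * x for v, x in zip(row, h))) for row in V]
        scores.append(sum(wi * ui for wi, ui in zip(w, hidden)))

    # Softmax over tiles; subtracting the max keeps exp() numerically stable.
    max_score = max(scores)
    exps = [math.exp(s - max_score) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]

    dim = len(embeddings[0])
    pooled = [sum(a * h[d] for a, h in zip(weights, embeddings)) for d in range(dim)]
    return pooled, weights


# Three toy tile embeddings and placeholder parameters.
tiles = [[0.0, 0.0], [1.0, 1.0], [3.0, 3.0]]
V = [[1.0, 0.0], [0.0, 1.0]]
w = [1.0, 1.0]
slide_embedding, attention = attention_pool(tiles, V, w)
print([round(a, 3) for a in attention])
```

The attention weights double as a rough map of which tiles drove the slide-level prediction, which is one reason this style of pooling is popular when only slide diagnoses are available.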

In practice, the tile extraction step is straightforward to implement. Here's a simplified version of the core loop:

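    import numpy as np
    import openslide


    def extract_tiles(slide_path, tile_size=256, level=0):
        """Return (x, y, tile) tuples for non-background tiles.

        x and y are level-0 pixel coordinates of each tile's top-left corner.
        """
        tiles = []
        with openslide.OpenSlide(slide_path) as slide:
            w, h = slide.level_dimensions[level]
            # read_region() takes its location in level-0 coordinates,
            # so scale the grid positions when level > 0.
            scale = slide.level_downsamples[level]
            for y in range(0, h, tile_size):
                for x in range(0, w, tile_size):
                    x0, y0 = int(x * scale), int(y * scale)
                    tile = slide.read_region((x0, y0), level, (tile_size, tile_size))
                    tile_np = np.array(tile.convert('RGB'))
                    # Skip background tiles (mostly white)
                    if tile_np.mean() < 220:
                        tiles.append((x0, y0, tile_np))
        return tiles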
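The fixed brightness cutoff in the loop above is the simplest possible background filter. Otsu binarization, one of the tissue-detection options named earlier, instead derives the threshold from the image histogram. The following is a dependency-free sketch that operates on a flat list of 8-bit grayscale values; in practice a library routine such as `skimage.filters.threshold_otsu` applied to a low-resolution thumbnail would be the more usual choice. The pixel values are toy data.

```python
def otsu_threshold(gray_values):
    """Return the 8-bit threshold that maximizes between-class variance."""
    histogram = [0] * 256
    for value in gray_values:
        histogram[value] += 1
    total = len(gray_values)
    sum_all = sum(i * count for i, count in enumerate(histogram))

    best_threshold, best_variance = 0, -1.0
    count_low, sum_low = 0, 0
    for t in range(256):
        count_low += histogram[t]          # pixels with value <= t
        sum_low += t * histogram[t]
        count_high = total - count_low
        if count_low == 0 or count_high == 0:
            continue
        mean_low = sum_low / count_low
        mean_high = (sum_all - sum_low) / count_high
        variance = count_low * count_high * (mean_low - mean_high) ** 2
        if variance > best_variance:
            best_variance, best_threshold = variance, t
    return best_threshold


# Toy thumbnail: dark stained tissue plus a bright glass background.
pixels = [60] * 50 + [80] * 50 + [235] * 100 + [245] * 100
threshold = otsu_threshold(pixels)
tissue_fraction = sum(p <= threshold for p in pixels) / len(pixels)
print(threshold, round(tissue_fraction, 2))
```

Pixels at or below the threshold are treated as tissue here. A per-slide threshold adapts to scanners whose background is not uniformly near white, which a hard-coded value cannot do.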

"Foundation model fine-tuning vs. training from scratch" covers the decision in full. The core rule is that you should not train from scratch until you have thoroughly evaluated pretrained encoders, because training on a dataset you haven't benchmarked against existing models rarely yields the expected result and makes the underlying failure harder to see.

Key Technical Challenges

Medical AI has a defined set of failure modes. Three technical challenges — distribution shift, label noise, and computational scale — account for the majority of deployment failures.

Distribution shift is the silent killer. It occurs when a model trained on slides from one institution encounters slides from another. Different scanners, staining protocols, and tissue preparation methods produce systematic visual differences, and a model trained at one hospital can drop 15-20% in accuracy when deployed at another, even on the same cancer type. Stain normalization reduces but does not eliminate this problem.
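One inexpensive way to make this failure visible is to report accuracy per source institution instead of a single pooled number. The sketch below assumes each evaluation record carries a site identifier; the site names and predictions are toy values, not measurements.

```python
from collections import defaultdict


def accuracy_by_site(records):
    """Compute accuracy per site from (site, true_label, predicted_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for site, truth, prediction in records:
        total[site] += 1
        correct[site] += int(truth == prediction)
    return {site: correct[site] / total[site] for site in total}


# Toy evaluation records: the training site and an unseen external site.
records = [
    ("hospital_a", "tumor", "tumor"),
    ("hospital_a", "normal", "normal"),
    ("hospital_a", "tumor", "tumor"),
    ("hospital_a", "normal", "tumor"),
    ("hospital_b", "tumor", "normal"),
    ("hospital_b", "normal", "normal"),
    ("hospital_b", "tumor", "normal"),
    ("hospital_b", "normal", "tumor"),
]
print(accuracy_by_site(records))  # {'hospital_a': 0.75, 'hospital_b': 0.25}
```

A large gap between sites in a breakdown like this is a signal to hold out entire institutions during validation, so the reported number reflects deployment conditions.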

Label noise is endemic to pathology datasets. Inter-observer agreement among pathologists for certain diagnoses (particularly grading) can be surprisingly low. Training with noisy labels requires either robust loss functions or consensus-based label refinement, both of which add complexity to the training pipeline.
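Both ideas in this paragraph can be quantified with very little code. The sketch below measures inter-observer agreement with Cohen's kappa and builds a simple majority-vote consensus that flags ties for re-review. The grade labels are toy data, and majority voting is only one of several possible consensus schemes.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two raters on the same cases."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both raters must label the same non-empty case list")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


def consensus_label(votes):
    """Majority vote; returns None on a tie so the case can be re-reviewed."""
    ranked = Counter(votes).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return None
    return ranked[0][0]


rater_1 = ["G1", "G2", "G2", "G3", "G1", "G2"]
rater_2 = ["G1", "G2", "G3", "G3", "G2", "G2"]
print(round(cohens_kappa(rater_1, rater_2), 3))  # 0.478
print(consensus_label(["G2", "G2", "G3"]))       # G2
```

Measuring agreement before training gives a realistic ceiling for model accuracy on that label: a model cannot be expected to agree with the reference more consistently than the pathologists agree with each other.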

What Changed in 2025-2026

The most significant shift in computational pathology over the past year has been the emergence of pathology foundation models. Models like UNI, CONCH, and Virchow were pretrained on very large pathology collections, on the order of a hundred thousand to more than a million slides, using self-supervised learning (CONCH additionally pairs images with captions). They produce tile embeddings that transfer effectively to downstream tasks with minimal fine-tuning.

This changes the economics of medical AI development. Previously, building a competitive pathology model required access to large, well-annotated datasets and significant compute for training. Foundation models reduce the data requirement to hundreds rather than thousands of labeled slides, making it feasible for smaller research groups, and for work on rare pathologies, to develop clinically useful models.
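A quick way to check how far frozen embeddings go on a small labeled set is a nearest-centroid classifier, which needs no training loop at all. The sketch below assumes each slide has already been reduced to a single embedding vector by some pretrained encoder. The two-dimensional vectors and labels are toy values, and the function names are illustrative.

```python
import math


def fit_centroids(embeddings, labels):
    """Average the embeddings of each class into one centroid per label."""
    sums, counts = {}, {}
    for vector, label in zip(embeddings, labels):
        if label not in sums:
            sums[label] = [0.0] * len(vector)
            counts[label] = 0
        sums[label] = [s + v for s, v in zip(sums[label], vector)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}


def predict(centroids, vector):
    """Return the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vector))


# Toy slide-level embeddings from a hypothetical frozen encoder.
train_embeddings = [[0.9, 0.1], [0.8, 0.2], [0.1, 0.9], [0.2, 0.8]]
train_labels = ["tumor", "tumor", "normal", "normal"]
centroids = fit_centroids(train_embeddings, train_labels)
print(predict(centroids, [0.7, 0.3]))  # tumor
```

If a baseline this simple already performs well on held-out slides, the encoder is carrying most of the signal, and a heavier fine-tuning run may not be needed.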

Conclusion

Building medical AI for pathology is a systems problem, not just a modeling problem. The pipeline from raw whole-slide images to clinically validated predictions involves tissue detection, tile extraction, feature encoding, attention-based aggregation, and careful evaluation against distribution shift and label noise.

The arrival of foundation models has simplified the feature extraction stage considerably, but the surrounding infrastructure — the data pipelines, evaluation frameworks, and deployment considerations — remains the hard part. The teams that get this right will define the next generation of diagnostic tools.

This is an active area of my research. If you're working on medical imaging AI or want to collaborate, feel free to reach out.